Potential ≠ Performance

Not in humans. Not in AI.

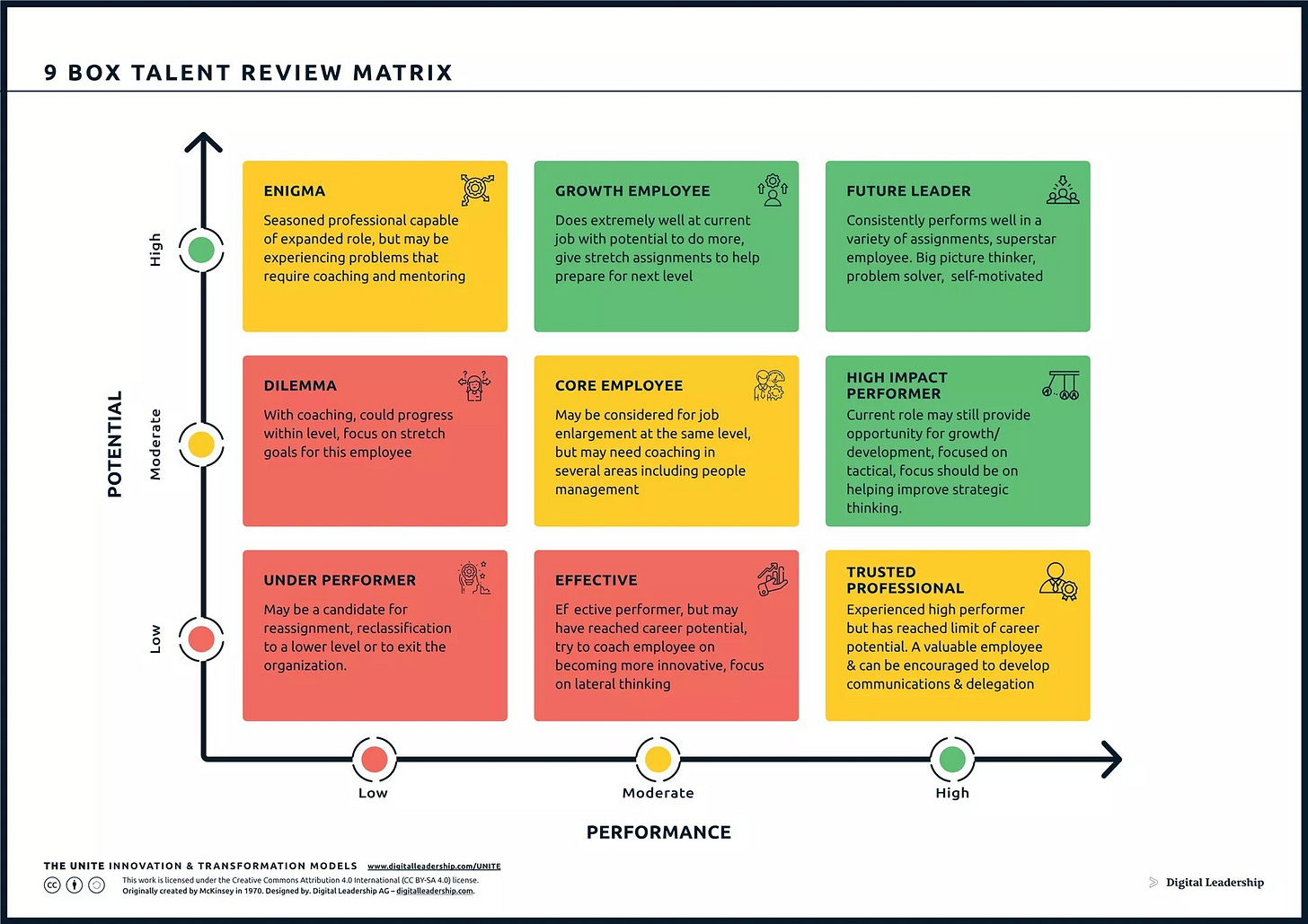

If you’re a people leader, you’re probably familiar with an evaluation framework called 9-Box, but let me walk you through it. The high-level gist is that we need to separate evaluating people’s performance from their potential.

Performance: How well they do their job, how reliably they show up, the quality of their actual output. It’s grounded in objective realities, KPIs, deliverables—‘doing the thing’.

Potential: This is more elusive. It’s an appraisal of what they’re truly capable of, how much they can stretch their abilities, what their ceiling really is.

You rate each individual on both counts (high, medium, low), and then those ratings are mapped onto the 9-Box grid to show where each individual sits (and how you might effectively lead them).

Like all leadership frameworks, 9-Box has its detractors. But I’ve always found the separation of performance and potential really useful. After all, it’s easy to conflate the two: when you’re championing a bright spark employee, you can get so passionate about their potential that you promote them too early (inadvertently setting them up to fail).

The separate articulation of ‘potential’ is also useful because it is clearly your evaluation as a leader; it’s a very outside-in perspective on the individual. After all, you may see potential in somebody. But that doesn’t matter a jot if they don’t see it in themselves, or just aren’t interested in pursuing it. And unless their perception of their potential is aligned with yours, their performance will remain unchanged.

So that’s 9-Box. But now, why am I talking about it today?

In short, because I think this framework for human evaluation can also help us get crisper when we talk about AI: AI has both a potential score and an actual performance score. And they’re not the same thing.

Matt Shumer’s take on AI’s potential

I’m sure many of you have read Matt Shumer’s ‘Something Big Is Happening’ post published last week. Everybody’s talking about it, and there are a lot of different parts to talk about. But what really struck me about this piece (and so many like it) is that they’re really talking about AI’s potential.

Look at the language:

“Given what the latest models can do, the capability for massive disruption could be here by the end of this year. It’ll take some time to ripple through the economy, but the underlying ability is arriving now.” (my emphases)

That he’s talking about potential doesn’t mean he’s wrong. In fact, I’ll bet he’s right. But there’s a separate thing to talk about: AI’s actual performance.

AI performance is still stitched to our performance

We’ve all experienced the delta. You don’t need my anecdotes about how fawning, frustrating, and bullheaded working with AI can be when you’re in the weeds with it day in, day out. And this isn’t because the story of its potential is a lie. It’s because AI’s actual performance, what AI actually does in the real world, is so intertwined with our own actual performance.

Shumer’s own evidence for the apocalypse is what AI can do in his hands, in his workflow, with his level of engagement. But he’s been an AI startup founder for six years and spends all day, every day, pushing these tools to their limits. He then extrapolates that to everyone, everywhere, within 1-5 years.

But buried in his own piece is the counterargument: His lawyer friend still won’t use it. He has to keep telling people to try the paid version. He acknowledges “almost nobody is doing this right now” and “the bar is on the floor.”

Shumer is describing a massive potential-performance gap and interpreting it as a timing problem (“they’ll catch up soon”). But to me, it’s not just a timing problem; it’s the same conflation of potential and performance that I might project on my bright spark team member.

AI’s potential is legitimately extraordinary. But potential doesn’t self-actualize.

Here’s what I’m seeing in my clients, coworkers, mentees, and friends: People don’t adopt tools linearly. They adopt them when it occurs to them, when they’re motivated, when their workflow creates the opening, and when they have the judgment to use them well. That’s not a gap that closes automatically because the models get better.

Better models sitting unused are still just potential.

Let me walk you through an actual example: A friend of mine used AI to develop a tool he thought might be helpful to content marketers (it basically did a bunch of big calculations). He asked me to look at it for him.

I could see what he was trying to get at. But it was also clear he didn’t understand how content marketers really work, what other tools we’re using, and where this could sit in our workflows. I didn’t have time to break it all down for him, so I went to Claude and prompted something along the lines of “You’re a regular content marketing manager at a B2B SaaS company, you do this [task] and already do [X, Y, Z]. If somebody handed you this, would it be useful to you?”

Claude articulated what I already knew and made helpful suggestions. I sent my friend the link to the chat. He saw what I gave him as ‘uniquely Jane,’ as if I have a different Claude than he does.

Remember ‘Let me Google that for you’? It was one of those moments, because this friend reached for me when he could have more easily reached for AI. And this wasn’t an adoption problem: He used AI to produce something (treating it basically like a SuperCalculator). But it didn’t occur to him to use AI to stress-test that thing from the user’s perspective (treating it as a Coworker).

That wasn’t a performance limitation of the AI; it could do both. It was an imagination limitation: I wouldn’t have done what he did, and he wouldn’t have done what I did.

Shumer looks at AI and says, “the tool is so good it’ll change everything.” My friend looks at my prompt and output and thinks, “Jane mode Claude is so helpful.”

Both locate the magic in the technology rather than in the human using it. One overestimates AI’s independent performance and sees only its boundless potential; the other underestimates their own potential to get better at using it. Same error, opposite conclusions.

So how does AI 9-Box? That depends on us…

Here’s the thing about us: We’re moody, mutable, inconsistent beings navigating our days by emotion, in various states of burnout and reactivity. I don’t prompt the same way on a Tuesday morning as I do on a Friday afternoon. Neither do you. And AI’s output inherits all of that—our clarity, our laziness, our creativity, our tunnel vision. It’s a mirror, not a motor.

This is partly why AI feels so personally bespoke; it reflects back at you. But it’s also why Shumer’s ‘potential’ story feels so distant for most of us. As long as humans are part of the equation, AI’s performance will reflect our performance. And human performance is incredibly variable—across organizations, individuals, tasks, the hours of a single day.

So how might AI do on 9-Box? Well, potential is automatically high. But performance? That’s largely dependent on the org and the individuals. AI’s 9-Box could land anywhere across the top row. Here’s how we might think about it:

High Potential / Low Performance: “The SuperCalculator”

People are using it, but only for production tasks—drafting, summarizing, number-crunching. It’s doing well at what it’s asked to do, which makes everyone feel productive. But nobody’s asking it to think, to stress-test, role-play, challenge assumptions, or evaluate. It’s performing at a low level, not because it can’t do more, but because nobody’s giving it more to do. The 9-Box recommendation for a human in this box? Coaching and mentoring. Exactly right here, too: People need guided, hands-on exposure to what AI can actually do, not another thinkpiece about what it might do someday.

High Potential / Medium Performance: “The Coworker”

This is AI in the hands of someone who’s started treating it as a thinking partner. They’re not just producing with it; they’re iterating, pressure-testing, using it to see around corners. Performance is climbing because the human is doing the work of framing problems well, bringing context, and knowing when to push back on the output. The 9-Box recommendation here is “stretch assignments to prepare for the next level.” Which raises the real question: Do we want the next level?

High Potential / High Performance: “Shumer’s World”

This is where AI’s performance matches its potential, and it’s the scenario that keeps people up at night. The 9-Box calls this person a “Future Leader: big picture thinker, problem solver, self-motivated.” Sound familiar? This is the AI that builds itself, writes its own code, exercises what Shumer calls “judgment” and “taste.” We’re not there yet. But the potential is real, and if the performance catches up, we will be in Shumer’s World.

But it’s worth remembering that the whole point of the 9-Box isn’t “move everyone to the top right.” It’s intentionality: Knowing where someone is, understanding where they could go, and making deliberate decisions about where to invest. Good org design isn’t everybody sitting in the same box.

And this is what I think is missing from the AI conversation entirely. Shumer assumes the gap closes universally and inevitably—that we’re all heading to the top-right corner, whether we like it or not. I’m telling you there’s a whole other variable at play: Yes, AI’s potential is sky-high. But its actual performance? That’s still a conversation about us.

🍓 Sweet treats before you go!

If you read one thing…

Well, I already gave you one with Matt Shumer’s piece. It’s definitely worth the full read if you haven’t already.

Here’s another: This developer’s guide to taste in the age of AI will be interesting to non-developers and anybody who has engaged in the blandification conversation. Instead of always giving AI guidelines (which can become a blandifying straitjacket), it made me want to toy with prompts like this:

You are an unreasonably creative frontend architect. Your ideas border on absurd, but that’s exactly why they work. You don’t think in grids and cards. You think in feelings, textures, and moments. When someone asks for a hero section, you don’t give them a centered headline with a gradient. You give them something they’ve never seen before… (link to continue reading)

If you buy one thing…

While I wait for Province of Canada to unleash their Heated Rivalry fleece, I nabbed this baby blue sweatshirt. All going well, this could easily become a ‘one in every colour’ purchase…